Effective Exploratory Testing (Special Series): Empower Your Exploratory Testing With Smart Tools (1)

Part 1: Effective Exploratory Testing (Part 1): Some good practices

Part 2: Effective Exploratory Testing (Part 2): More Effective With Pair Exploratory Testing

Part 3: Effective Exploratory Testing (Part 3): Explore It, Automate It

Part 4: Effective Exploratory Testing (Part 4): A Practice of Pair Exploratory Testing

Part 5: Effective Exploratory Testing (Part 5): Risk-based Exploratory Testing

Special Series 1: Effective Exploratory Testing: Empower Your Exploratory Testing With Smart Tools (1) (Reading)

Special Series 2: Effective Exploratory Testing: Empower Your Exploratory Testing With Smart Tools – A Case Study (2)

Context-Driven Testing Framework: Effective Exploratory Testing: Context-Driven Testing Framework

Scripted Testing (Traditional Testing) in the Agile & DevOps world:

While we live in a multidimensional, constantly changing context, why do we still test our applications with predefined scripts (a static context) written as test cases? These test cases are derived from requirements, specifications, and “process”, an approach that James Bach calls “Scripted Testing”.

Agile has been moving fast and has transformed considerably in recent years. In the last couple of years, we have heard of TDD, BDD, Scrum, Lean, Kanban, and now DevOps. In this era, Scripted Testing becomes heavier and less adaptable. Using scripted testing in an agile environment is like running a marathon in a jet suit. Consequently, Scripted Testing is becoming obsolete and is being replaced by more suitable methodologies. Context-Driven Testing is one of them. The numbers below illustrate big transformations in the Testing world.

So, what is Context-Driven Testing?

It is a way of differentiating testing which is dependent on its context from that which is not. Exploratory testing pays a great deal of attention to context. Many people who write about, teach, and push the boundaries of exploratory testing have identified themselves with the Context-Driven School – but it’s by no means an exclusive relationship.

The term Exploratory Testing was coined by Cem Kaner; it is most often used to describe an approach to testing. If we are using exploratory testing, we are typically designing our tests as we run them, and learning from the results to design more.

The differences between exploratory testing and scripted testing can be identified as follows:

| Scripted Testing | Exploratory Testing |
| --- | --- |
| Is directed from elsewhere | Is directed from within |
| Is determined in advance | Is determined in the moment |
| Is about confirmation | Is about investigation |
| Is about controlling tests | Is about improving test design |
| Emphasizes predictability | Emphasizes adaptability |
| Emphasizes decidability | Emphasizes learning |
| Like making a speech | Like having a conversation |
| More … | More … |

Exploratory Testing in Sessions:

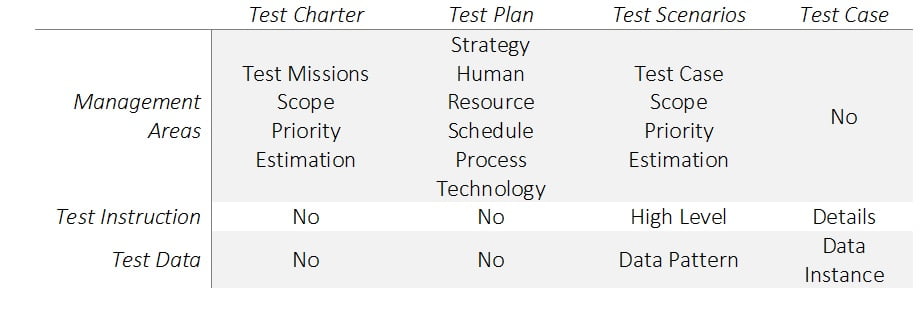

- Test Charters: A test charter states the mission of the tests and some of the testing tactics. It records decisions on what to test in the target software and how to test it. A test charter is not a test plan, a test scenario, or a test case. It does not go into detail or define strict instructions for the test executor. Test charters break the overall testing objective into narrow missions, each with a defined scope for exploration. A test charter helps the tester stay focused during test execution and helps with managing the tests.

- How to perform exploratory testing: Exploratory testing can be organized and executed in a timebox (60 to 120 minutes) called a test session (session-based testing). A session is carried out against a test charter by one or more testers. During the session, testers need to record a few things that let them show others how they reached their discoveries and that support reviewing and reporting their findings. Recommended records include, but are not limited to:

- What they did

- What they saw, observed

- Questions, new ideas

- Challenges, blocks

- Quality of test charter

- Bug findings
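The record categories listed above can be kept in a small structure so that every session is noted in the same shape. The following is only a minimal sketch: the class name `SessionNotes`, its field names, and the sample entries are hypothetical and are not part of One2Explore or of any session-based testing tool.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class SessionNotes:
    """Hypothetical container for the records kept during one test session."""

    charter: str
    duration_minutes: int
    actions: List[str] = field(default_factory=list)           # what they did
    observations: List[str] = field(default_factory=list)      # what they saw, observed
    questions: List[str] = field(default_factory=list)         # questions, new ideas
    blockers: List[str] = field(default_factory=list)          # challenges, blocks
    charter_feedback: List[str] = field(default_factory=list)  # quality of test charter
    bugs: List[str] = field(default_factory=list)              # bug findings

    def within_timebox(self, low: int = 60, high: int = 120) -> bool:
        """True if the session length fits the 60 to 120 minute timebox."""
        return low <= self.duration_minutes <= high

    def summary(self) -> Dict[str, int]:
        """Count entries per category as a starting point for debriefing."""
        return {
            "actions": len(self.actions),
            "observations": len(self.observations),
            "questions": len(self.questions),
            "blockers": len(self.blockers),
            "charter_feedback": len(self.charter_feedback),
            "bugs": len(self.bugs),
        }


# Illustrative usage with made-up entries.
notes = SessionNotes(
    charter="Explore how to compose with file attachments",
    duration_minutes=90,
)
notes.actions.append("Composed an email with one document file attached")
notes.bugs.append("Example bug entry (illustrative only)")
print(notes.within_timebox(), notes.summary())
```

Counting entries per category does not measure how efficient a session was; it only gives the reviewer a quick overview before reading the notes themselves.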

Challenges in Exploratory Testing that motivated One2Explore:

We did not start building One2Explore from suppositions. We sought to build a solution from the ground up, derived directly from challenges encountered in our work.

- Hard to measure efficiency: Although exploratory testing is well managed through sessions, it is still hard to answer the question “how efficient was the session that has been conducted?”. Even when many things have been noted for later debriefing, reviewing all these notes and understanding them in the right context is difficult.

- Hard to review/debrief: As part of the session-based testing procedure, debriefing and reviewing of results take place right after a session is executed. The purposes of this activity are to:

- (1). Evaluate the findings

- (2). Learn from the testing

- (3). Analyze test coverage

- (4). Compare the result against the test charter

- (5). Check whether any additional testing is needed.

However, reviewers face a great challenge with a pile of notes recorded over 60 to 120 minutes. Either the notes are thoroughly detailed, which takes a lot of time and makes debriefing and result reviewing cumbersome, or they are kept at a high level, which makes them ineffective.

- Hard to replicate failures: Sometimes, when an abnormality is encountered, you find yourself not knowing where it comes from. You may have lost track of your testing. Or it is a case of “the dog that did not bark”: what you have tracked simply does not support replicating the problem.

- Hard to know exactly what a tester was doing in their sessions and how & why s/he did it

Graph Your Exploratory Testing as a Solution

Visual aids are powerful: we find it easy to explain things with charts, lines, circles, and squares. With current software development methods, instead of documenting requirements in hundreds of pages or planning projects in dozens of pages, many teams now use Scrum boards and mind maps to visualize their plans, project progress, and project requirements. For the challenges of Exploratory Testing described above, the solution we identified is to track all data about what testers have done and visualize it in forms that support further qualitative and quantitative analysis and make it easy to convey messages about testing quality. We find that using graphs to display test execution and relevant information is an approach that improves exploratory testing productivity.

- Building Graphs: All of a tester’s actions generate a graph. Imagine you are testing a portion of the SUT (Software Under Test) chartered with the mission “Explore how to compose with file attachments”. You come up with many scenarios, such as “compose an email with one document file attached”, “compose an email with one media file attached”, “compose an email with one or more large files attached”, and so on. Each scenario is executed as a series of actions that interact with the app. These actions build a graph in which each screen is represented as a node, and actions (such as submitting or navigating) create the edges that link nodes. An agent installed on the tester’s machine records all actions and initiates and builds the graph for the current test execution.

- Record relevant data: Besides recording the tester’s activities, it is also necessary to capture other data used for analyzing the efficiency of your exploratory testing. The data is then coded into categories:

- Internal Data is all visible elements that compose a screen or page. Usually, they are DOM elements such as buttons and hyperlinks, together with their properties.

- External Data is all invisible elements and behavior of a screen or page. Usually, it includes parameters, request and response timing, the time at which the screen is interacted with, and so on.

- Store Exploratory Execution: All nodes, edges, and data are stored in a graph database. The data is stored as values of a node.

- Visualize Exploratory Testing: Your execution is graphed. In this graph, nodes are screens associated with their URLs, and each edge carries an indicator of the step number. The graph makes it easy to review test execution. A Test Lead can quickly and easily grasp what testers have done and what their test ideas were.

- Build Master Graph to increase the efficiency: Visualizing the test executions for a charter as graphs helps make debriefing and reviewing of tests efficient. It quickly answers what testers have done and what their testing covers. The set of these graphs is merged into one bigger graph called the “Master Graph”. Over time, the Master Graph becomes compelling evidence of test coverage, test ideas, and test results. Additionally, a comprehensive Master Graph helps evaluate how good a test execution was, by comparing the Master Graph with the graph created by that execution.

- How to build the Master Graph: This is a problem of identifying common graph isomorphism. The implementation of our application is described briefly as follows:

- Reviewing test execution more efficiently with graphs: With the graphs built, reviewers (the test lead and experienced testers who can be involved in the debrief session) can compare the current test execution with another particular test execution (for example, one executed by more senior testers). The differences between the two executions, visualized as graphs, help reviewers recognize and understand the context generated during testing.

- Comparing the Master Graph with the active graph created by the current test execution to find missing scenarios and to recommend new test ideas. By comparing the Master Graph with the active one, we are able to point out branches missing from the active graph. Those missing branches are scenarios that the tester may have missed in the current execution. With the Master Graph and the set of previously recorded steps, the reviewer is able to reproduce scenarios created in previous test executions of the same charter. This can be found in our case study, which is going to be published next week. The same comparison also identifies new test ideas that are not yet in the Master Graph. These are immediately integrated into the Master Graph for use in further analysis of the next test execution.


- Advancing exploratory testing by mining test data and recommending test ideas. All the data captured during testing is the basis for deeper analysis that yields new test ideas. Some context can be built to sharpen exploratory testing as follows:

- Use internal data

- We can spot which elements have been missed in the current testing

- We can identify business constraints that are a set of web elements that need to be verified

- We can identify dead links, unreachable objects in a page

- And so on

- Use external data

- We can detect abnormal processes being run along with the application

- We can detect abnormal durations when data is transmitted between client and server

- And so on
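The graph workflow described in this section (one graph per test execution, merged into a Master Graph, then compared to find missing branches and new test ideas) can be sketched in a few lines. This is a deliberately simplified illustration, not the One2Explore implementation: it assumes every screen is uniquely identified by its URL, so that merging reduces to a set union instead of the graph-matching problem the article mentions. The function names, URLs, and action labels are all hypothetical.

```python
from typing import List, Set, Tuple

# An edge is (source screen URL, action, target screen URL).
# Assumption: a URL uniquely identifies a screen (node).
Edge = Tuple[str, str, str]


def build_graph(steps: List[Edge]) -> Set[Edge]:
    """Turn the recorded steps of one test execution into a set of edges."""
    return set(steps)


def merge_into_master(master: Set[Edge], execution: Set[Edge]) -> Set[Edge]:
    """Merge one execution graph into the Master Graph (plain set union)."""
    return master | execution


def missing_branches(master: Set[Edge], execution: Set[Edge]) -> Set[Edge]:
    """Edges known to the Master Graph that the execution did not cover."""
    return master - execution


def new_ideas(master: Set[Edge], execution: Set[Edge]) -> Set[Edge]:
    """Edges the execution explored that the Master Graph does not have yet."""
    return execution - master


# Earlier execution, for example by a more senior tester (made-up data).
earlier = build_graph([
    ("/inbox", "click compose", "/compose"),
    ("/compose", "attach document", "/compose/attach"),
    ("/compose", "attach media", "/compose/attach"),
])
master = merge_into_master(set(), earlier)

# Current (active) execution of the same charter.
active = build_graph([
    ("/inbox", "click compose", "/compose"),
    ("/compose", "attach document", "/compose/attach"),
    ("/compose", "attach large file", "/compose/attach"),
])

missed = missing_branches(master, active)   # candidate scenarios to revisit
fresh = new_ideas(master, active)           # ideas to add to the Master Graph
master = merge_into_master(master, active)  # integrate the new ideas
```

Using sets of edges keeps the comparison to two set differences. A real tool would also need to decide when two screens count as the same node, which is where the harder graph-matching problem comes in.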


An AI tool cannot test by itself in the sense of replacing the human; however, it can accelerate testing, especially exploratory testing. Testers teach and train the tool to make it smarter, and in turn it brings great support and power back to the testers. This is a kind of transformation in this AI era.

In the next article, we will present a case study with the benefits and practices of One2Explore at MeU Solutions.