The MeU software testing approach: completely effective and different

We begin this story with our background before sharing our case study of testing Client X's application. You can also download the guidelines, templates and samples of our approach from this story.

With about 15 years of hands-on experience in software testing, across various industries and many application types, we kept running into the same testing inefficiencies in our early years. Defects that escaped our testing were usually rooted in surprising contexts that we could not have imagined when deriving our test cases from the product’s requirements. We spent thousands of hours just trying to document those test cases in as much detail as possible.

Over time, bored with this kind of work and all being fans of agile philosophy, we decided to give up that approach. We call it “Scripted Testing” or “Specification-Driven Testing”: you don’t know what will happen, but you still try to write scripts for your testing. Along the way, we had the chance to talk to James Bach and Paul Holland, prominent proponents of “Context-Driven Testing (CDT)”. From that point, we researched the approach extensively and spent much of our time working with it. After more than 3 years, we are convinced of its true value. Context-Driven Testing has given us great momentum, sharpening our minds to think differently about testing and about the product under test. The approach revolves around logical analysis of the context, in which prospective users have different preferences. Instead of sitting for hours writing boring test cases, we pair up to explore and test together, in a process of asking and answering questions within the context given by the application. We think about the context more logically and refresh our minds to generate new test ideas about the product. The process continues until we are confident about product quality.

How we test a product

The product is an eCommerce website from which the owner sells all their products, games and movies. We were given 3 days for all testing of the website, with a team of two testers who, of course, have hands-on experience with Context-Driven Testing.

Initiate Product Body of Knowledge

Our team started by establishing their knowledge of the product in the form of a mind-map, using XMind. The image illustrates part of our result.

The focus is on six elements: Functions, Interface, Platform, Data, Operation and Time. This serves as a generic framework for covering as much as possible of the product as it would be used in production. These elements were broken down into smaller items. Below is a portion of our mind-map for Functions.

While creating the mind-map, we added test ideas to it so that we could confirm our product knowledge with the client. This product knowledge is used to generate test charters at a later stage.
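As a rough illustration, the top level of such a mind-map can be kept as a plain outline in code. This is only a sketch: the six element names, the user actions and the devices come from this story, while the empty branches simply mark elements whose sub-items are not detailed here, and the helper names `outline` and `unexplored` are made up for the example.

```python
# Top-level product elements from the mind-map. Sub-items are taken from
# this story where it mentions them; empty lists mark branches whose
# contents are not described here (they are not real findings).
product_map = {
    "Functions": ["play games", "download games", "watch movies", "purchase products"],
    "Interface": [],
    "Platform": ["iPhone", "iPad", "PC", "iMac"],
    "Data": [],
    "Operation": [],
    "Time": [],
}


def outline(pm):
    """Render the map as an indented text outline."""
    lines = []
    for element, items in pm.items():
        lines.append(element)
        lines.extend(f"  - {item}" for item in items)
    return "\n".join(lines)


def unexplored(pm):
    """Return the elements that still have no sub-items."""
    return [element for element, items in pm.items() if not items]


print(outline(product_map))
print("Still to explore:", unexplored(product_map))
```

Listing the empty branches is a cheap way to see which parts of the product nobody has asked questions about yet.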

Conduct Risk Assessment

The numbers you see in this mind-map represent the importance of features. Because we had only 3 days of testing for this product, we decided to do a quick risk assessment, based on factors such as:

- Who is using this application – The target users are children; sometimes their parents visit the website to purchase games for their child

- What are the users' actions – Children come to play/download games and watch movies; their parents come to purchase products

- What is the important transaction – While an issue with games may not have a big impact, issues related to purchase errors will have serious consequences

- What devices are used for access – With the context being the US, iPhone, iPad, PC and iMac are the assumed primary devices used to access the site. Children are more likely to use mobile devices such as iPad and iPhone rather than the PC or iMac

- Which areas are used most – We would choose Mobile Games as the first priority

- ….

As a result of our assessment, we chose the Mobile Games feature, tested on iPad and PC, as the highest priority.
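A quick risk assessment like the one above can be sketched as a simple usage-times-impact score. The ratings below are invented purely for illustration and are not the numbers from our mind-map; the `Area` class and its field names are also assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Area:
    name: str
    usage: int   # 1-5: how often target users are expected to touch it (assumed rating)
    impact: int  # 1-5: how serious a failure would be (assumed rating)

    @property
    def risk(self) -> int:
        # Simple product of the two ratings; other weightings are possible.
        return self.usage * self.impact


# Hypothetical ratings, chosen only to show how the ranking works.
areas = [
    Area("Purchase", usage=2, impact=5),
    Area("Movies", usage=3, impact=2),
    Area("Mobile Games", usage=5, impact=3),
]

# Highest risk score first.
ranked = sorted(areas, key=lambda a: a.risk, reverse=True)

for area in ranked:
    print(f"{area.name}: {area.risk}")
```

With these assumed ratings, heavy use by children outweighs the higher impact of a purchase error, which is one way a feature such as Mobile Games can end up first in the queue.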

Develop Test Charters

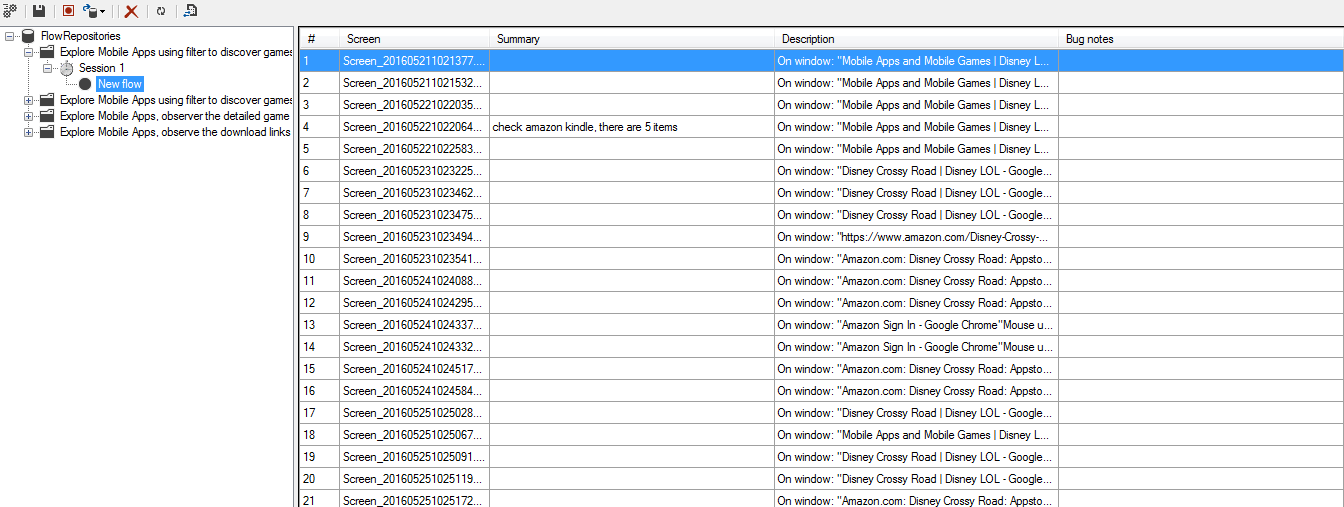

From this point, we began a short time-boxed session to explore Mobile Games, working as a pair. During this session, we questioned every scenario we could think of in context and generated related test ideas. When the session was complete, our two testers held a debrief to analyze the results and determine what needed to be tested. We came up with a list of test charters to be used for execution later, as in the image below.

Execute Testing in Sessions

We began by picking 4 charters to perform within four 2-hour sessions:

- Explore the Mobile Apps section, download a mobile game/app and play some games to make sure that they are downloadable and workable, and that the downloaded game information is consistent with the information on the website.

- Explore Mobile Apps using the filter to discover whether games are put into the right category (available on)

- Explore Mobile Apps and observe whether the detailed game page information is correct: content and layout are consistent

- Explore Mobile Apps and observe the download links of each game to discover whether the links work, open the right store, and the information about that game in that store is correct.

While executing the sessions, we modeled our tests with a list of heuristics and oracles. Modeling our tests helped the team come up with more test ideas, evaluate the product better in the given context and, more importantly, generate test data in various classifications (or partitions, as traditional testing calls them).
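The four charters and their 2-hour time boxes can be tracked with a very small data structure. This is a minimal sketch, not the format our team actually used: the `Session` class, its fields and the shortened charter wording are assumptions for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field

SESSION_HOURS = 2  # each session is time-boxed to 2 hours


@dataclass
class Session:
    charter: str
    hours: int = SESSION_HOURS
    notes: list[str] = field(default_factory=list)    # observations, new test ideas
    defects: list[str] = field(default_factory=list)  # defects found in this session


# Shortened versions of the four charters listed above.
charters = [
    "Explore Mobile Apps: download and play games, compare info with the website",
    "Explore Mobile Apps: use the filter to check game categories",
    "Explore Mobile Apps: check detail page content and layout",
    "Explore Mobile Apps: check download links and store information",
]

sessions = [Session(charter) for charter in charters]
total_hours = sum(session.hours for session in sessions)

print(f"{len(sessions)} sessions, {total_hours} hours planned")
```

Keeping notes and defects on the session itself gives each debrief a single record to walk through.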

Debriefing and improving the next sessions

A debriefing is needed whenever a session is done. Our team sat together to talk about what had been tested, their observations, the defects found and new test ideas; they also identified areas missed in the session. A debriefing yields lessons that make the next sessions better. It is also a chance for our testers to share their experience with the product under test. Debriefing notes will be used for regression testing if needed.

Inventing a new tool

However, our team recognized that it would have been much better if their testing had been recorded, capturing everything they went through. We immediately decided to develop a utility for this purpose; its implementation took 2 days. Our new tool allowed us to record and capture all pre-configured actions such as mouse click, mouse move, key enter, .. The tool also offers a range of features such as taking notes during testing, taking bug notes, real-time modification of any captured screen, reporting in HTML format … Thank God we have great teams with multiple skills who are ready to test in any circumstances. You can download this tool (One2Explore) from our website for your testing.

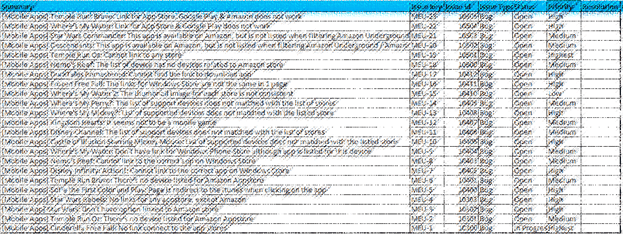

Happy Ending – A Great Result

After four sessions totaling eight hours of testing (taking our team 2 days), we uncovered 28 defects. Our team believes that some of them could not have been found with the old approach.

In summary, our team spent 3 days: 1 day to initiate Product Knowledge and 2 days for test charters and their execution. We found 28 defects in only 4 sessions covering 4 charters. How much faster do you think another approach could be?
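For readers who like to see the arithmetic, the figures above work out as follows. The variable names are ours; the inputs are the numbers reported in this story.

```python
defects_found = 28
sessions_run = 4
hours_per_session = 2

# Total time spent inside time-boxed sessions.
session_hours = sessions_run * hours_per_session

# Average yield per session and per session hour.
defects_per_session = defects_found / sessions_run
defects_per_hour = defects_found / session_hours

print(f"{session_hours} session hours")
print(f"{defects_per_session} defects per session")
print(f"{defects_per_hour} defects per session hour")
```

These are averages over session time only; they do not include the day spent building product knowledge or the time spent on debriefs.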

Download our innovative exploratory testing tool, and check our effective testing approach here.